I think he is on to something here. I've believed for a while that the best way to learn to use agents and LLMs for writing code is to focus more on changing the spec than on fixing mistakes in the code. That works for smaller projects, but it can be problematic, to say the least, on larger ones. What he describes is a realistic solution to that problem.

Code implementation clarifies and communicates intent. I could stop there and walk out of the room. I missed this with whenwords.

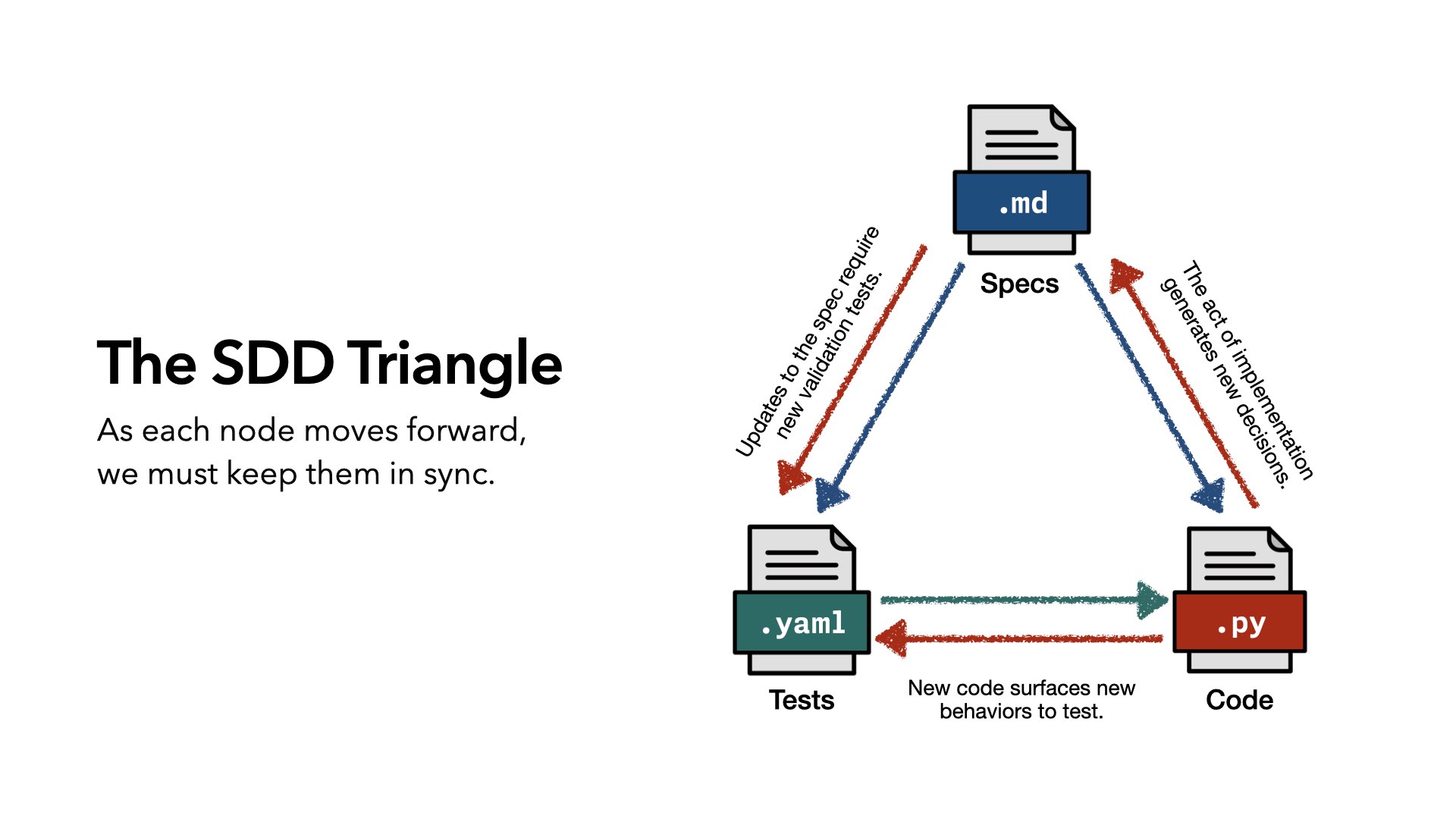

The job is to keep specs, code, and tests in sync as they move forward. The system for managing that has to stay simple. If it creates developer mental overhead, it just moves the problem somewhere else.

The act of writing code improves the spec and the tests. Just like software doesn’t truly work until it meets the real world, a spec doesn’t truly work until it’s implemented.

No-code libraries are toys because they are unproven.

Even if you aren’t the one making decisions during implementation, decisions are being made. We should leverage LLMs to extract and structure those decisions.
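To make "extract and structure" a bit more concrete, here is a minimal sketch of what a structured decision record could look like. Everything in it is my own assumption, not something from the talk: the `Decision` fields, the `parse_decisions` and `unpinned` helpers, and the idea that an LLM is asked to reply with a JSON array in this shape. The LLM call itself is left out; the sketch only covers what happens to the reply.

```python
import json
from dataclasses import dataclass, field
from typing import List


@dataclass
class Decision:
    """One implementation decision, in a hypothetical record format."""
    spec_item: str   # which part of the spec the decision touches
    choice: str      # what the implementation actually does
    rationale: str   # why, as far as it can be recovered
    tests: List[str] = field(default_factory=list)  # tests that pin the behavior


def parse_decisions(raw: str) -> List[Decision]:
    """Turn a JSON array (for example, an LLM's reply) into Decision records."""
    return [Decision(**item) for item in json.loads(raw)]


def unpinned(decisions: List[Decision]) -> List[Decision]:
    """Return decisions that no test covers yet, i.e. where spec, code, and tests have drifted."""
    return [d for d in decisions if not d.tests]


# Hypothetical reply, hand-written here for illustration.
raw_reply = """
[
  {"spec_item": "date parsing",
   "choice": "two-digit years are read as 20xx",
   "rationale": "the spec does not say",
   "tests": ["test_two_digit_year"]},
  {"spec_item": "empty input",
   "choice": "returns an empty string instead of raising",
   "rationale": "matches the behavior of the first implementation"}
]
"""

decisions = parse_decisions(raw_reply)
for d in unpinned(decisions):
    print(f"Decision without a test: {d.spec_item}: {d.choice}")
```

The point of keeping the record this small is the same as above: if the format needs explaining, it has already become overhead.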

And finally: we’ve been here before. The answer then was process. The answer now is also process. And just as we leverage cloud compute to enable CI/CD for agile, we should leverage LLMs to build something lightweight enough to fit in our heads, that doesn’t slow us down, and that helps us make sense of our software.