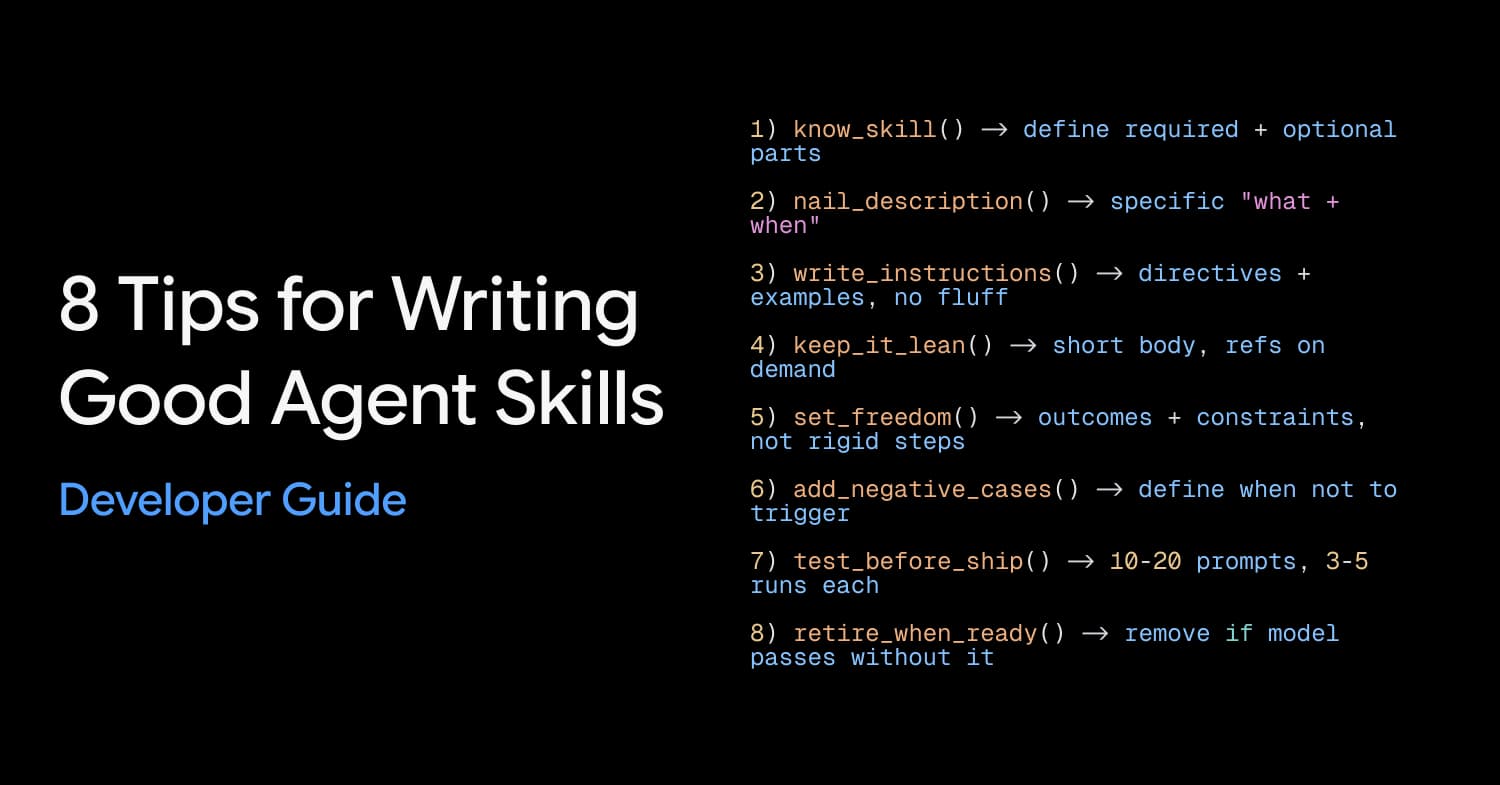

Prompts are technical debt too

In this sense, prompts are a worse form of technical debt than code. When technical debt in code blows up, it usually causes errors or a tangible slowdown as you try to understand the code. Prompts, by contrast, decay silently. And even janky code tends to stay relatively stable when left untouched, whereas every single model upgrade could turn a functional prompt into a non-functional one.

seangoedecke.com